TLDR: Deterministic networking is a fundamental shift that gives data centers explicit control over traffic paths, moving beyond the limitations of best-effort routing like BGP to meet the specific performance and consistency demands of modern workloads.

Modern applications place demands on networks that traditional routing systems were never designed to meet. Distributed databases replicate data across regions. Machine learning pipelines move massive datasets between storage and compute clusters. Real-time services require consistent latency across multiple infrastructure environments.

Despite these requirements, the networks connecting data centers and cloud environments still operate largely on best-effort routing.

The Border Gateway Protocol (BGP) determines how traffic moves between networks by exchanging route advertisements between autonomous systems. This mechanism ensures reachability across the internet, but it provides limited control over how traffic actually travels between endpoints.

For modern data center infrastructure, reachability alone is no longer sufficient. Applications increasingly require deterministic connectivity, where infrastructure can control the exact path traffic takes between workloads.

Deterministic data paths represent a fundamental shift in how networks operate. Instead of relying on indirect routing policies, infrastructure platforms can explicitly select the routes used for communication.

This change allows data centers to treat networking with the same level of control they already apply to compute and storage.

Traditional internet routing relies on route advertisement.

Each network announces the IP prefixes it can reach. Routers exchange these announcements and construct routing tables, selecting a best route for each prefix according to BGP policy. When a packet arrives, the router forwards it along the selected route for the most specific matching prefix.
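The selection step can be sketched in a few lines of Python. This is a deliberately simplified model, not the full BGP decision process: it assumes only three tie-breakers (longest matching prefix, then highest local preference, then shortest AS path), and the `Route` class, the `best_route` function, the peer names, and the documentation-range prefixes and AS numbers are all hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prefix: str       # advertised IP prefix, e.g. "203.0.113.0/24"
    as_path: tuple    # autonomous systems the advertisement traversed
    local_pref: int   # operator-assigned preference (higher wins)
    next_hop: str     # neighbor that advertised the route (hypothetical name)


def best_route(routes, destination):
    """Pick a route for destination: longest matching prefix first,
    then highest local preference, then shortest AS path."""
    addr = ipaddress.ip_address(destination)
    candidates = [r for r in routes if addr in ipaddress.ip_network(r.prefix)]
    if not candidates:
        return None  # no advertisement covers this destination
    return max(
        candidates,
        key=lambda r: (
            ipaddress.ip_network(r.prefix).prefixlen,
            r.local_pref,
            -len(r.as_path),
        ),
    )


routes = [
    Route("203.0.113.0/24", (64500, 64510), 100, "peer-a"),
    Route("203.0.113.0/24", (64501, 64505, 64510), 200, "peer-b"),
    Route("203.0.0.0/16", (64502,), 300, "peer-c"),
]

chosen = best_route(routes, "203.0.113.7")
print(chosen.next_hop, chosen.as_path)  # peer-b (64501, 64505, 64510)
```

Note that the router prefers `peer-b` because of its local preference, even though that route has the longer AS path. The sender of the packet has no say in the outcome, and every network further along the path makes the same kind of choice independently.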

The key characteristic of this system is indirect control.

Operators influence routing behavior by adjusting advertisements or routing policies, but they rarely define the precise path that traffic will follow across networks.

In practice, multiple independent networks participate in determining the final route.

A packet traveling between two data centers may pass through several autonomous systems. Each network along the path applies its own routing preferences. The resulting route may not reflect the priorities of the originating data center or the application generating the traffic.

Deterministic networking reverses this model.

Instead of advertising reachability and allowing intermediate networks to determine the route, the originating system can explicitly define the path between endpoints.

Path selection becomes a direct decision rather than an indirect outcome of distributed routing policies.

This shift enables infrastructure teams to build networks that behave predictably and align with application requirements.

Deterministic networking introduces explicit control over how traffic flows between workloads.

Rather than relying on global routing tables, connectivity is established between specific endpoints using policies that define the desired path characteristics.

Several core principles define deterministic networking.

First, connectivity operates at the workload level rather than at the network prefix level. Services communicate directly with other services rather than relying on network-wide route advertisements.

Second, routing decisions become policy-driven. Operators or applications can specify constraints such as latency requirements, bandwidth availability, geographic location, or trusted network providers.

Third, network paths become observable and predictable. Infrastructure teams can understand exactly how traffic moves through the network.
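A minimal Python sketch shows how these principles might fit together. It assumes a set of candidate paths whose hops and measurements are already known, and a policy expressed as hard constraints. Every name here (`Path`, `Policy`, `select_path`, the site codes, and the carriers) is hypothetical and is not taken from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    name: str
    hops: tuple             # ordered hops, known before traffic is sent
    latency_ms: float
    bandwidth_gbps: float
    regions: frozenset      # regions the path traverses
    providers: frozenset    # network providers the path uses


@dataclass(frozen=True)
class Policy:
    max_latency_ms: float
    min_bandwidth_gbps: float
    allowed_regions: frozenset
    trusted_providers: frozenset


def select_path(paths, policy):
    """Return the lowest-latency path meeting every constraint, or None."""
    eligible = [
        p for p in paths
        if p.latency_ms <= policy.max_latency_ms
        and p.bandwidth_gbps >= policy.min_bandwidth_gbps
        and p.regions <= policy.allowed_regions        # subset check
        and p.providers <= policy.trusted_providers    # subset check
    ]
    return min(eligible, key=lambda p: p.latency_ms, default=None)


paths = [
    Path("direct", ("fra1", "ams1"), 9.0, 10.0,
         frozenset({"eu"}), frozenset({"carrier-a"})),
    Path("transit-x", ("fra1", "lon1", "ams1"), 7.0, 40.0,
         frozenset({"eu"}), frozenset({"carrier-x"})),
    Path("scenic", ("fra1", "par1", "ams1"), 14.0, 40.0,
         frozenset({"eu"}), frozenset({"carrier-b"})),
]
policy = Policy(
    max_latency_ms=12.0,
    min_bandwidth_gbps=10.0,
    allowed_regions=frozenset({"eu"}),
    trusted_providers=frozenset({"carrier-a", "carrier-b"}),
)

chosen = select_path(paths, policy)
print(chosen.name, chosen.hops)  # direct ('fra1', 'ams1')
```

In this sketch the fastest candidate is rejected because its provider is not trusted, and the selected path's hop list is available for inspection before any traffic is sent, which is the sense in which the path is both policy-driven and observable.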

This model aligns networking with how modern infrastructure already manages other resources.

When a developer deploys a workload, they often specify compute requirements, storage characteristics, and geographic placement. Deterministic networking extends that level of control to network connectivity.

Applications can request the type of connectivity they require rather than accepting whatever path the global routing system happens to provide.

Many modern workloads depend heavily on network performance.

Small variations in latency or bandwidth can significantly affect application behavior. Deterministic path selection allows data centers to tailor network behavior to the needs of specific workloads.

Several examples illustrate why this capability matters.

Database replication often occurs between multiple regions to provide resilience and disaster recovery.

Replication traffic must travel quickly and reliably between nodes. If routing changes introduce latency spikes or congestion, replication lag can increase, which may affect application performance or data consistency.

Deterministic paths allow operators to route replication traffic over the most reliable, lowest-latency paths available.

Machine learning workloads frequently involve large clusters of GPU servers exchanging enormous datasets.

Training pipelines may transfer terabytes of data between storage systems and compute clusters. Network throughput becomes a critical factor in overall training performance.

Deterministic routing allows data centers to select high-bandwidth paths for these transfers, reducing the risk that the network becomes the bottleneck.

Applications such as financial trading platforms, multiplayer gaming infrastructure, and real-time analytics systems depend on extremely consistent latency.

Unpredictable routing changes can introduce jitter or delays that degrade the user experience.

By controlling traffic paths directly, operators can keep these services on the lowest-latency routes available.

Data centers often replicate storage across multiple sites to maintain redundancy.

Large backup operations may move massive volumes of data between facilities. These transfers benefit from predictable bandwidth allocation and optimized routing.

Deterministic networking allows storage traffic to use routes that maximize throughput without interfering with latency-sensitive workloads.

Beyond improving application performance, deterministic networking also simplifies operations.

When network paths are explicitly defined, infrastructure teams gain greater visibility into how traffic flows through the system.

This clarity reduces the difficulty of troubleshooting performance issues.

Instead of analyzing complex routing policies across multiple networks, operators can directly observe the path used by a particular connection.

Deterministic networking also reduces reliance on indirect traffic engineering techniques.

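Traditional approaches often involve adjusting routing advertisements or manipulating network metrics, for example through AS path prepending or MED tuning, in an attempt to influence path selection. The results of these techniques can be difficult to predict and maintain, because other networks remain free to apply their own preferences.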

Explicit path selection eliminates much of this guesswork.

Another operational benefit is improved planning.

When network behavior becomes predictable, teams can design infrastructure architectures with greater confidence. They can allocate bandwidth resources more effectively and ensure that critical workloads receive appropriate network capacity.

This predictability becomes especially valuable as data center environments scale to support thousands of services across multiple locations.

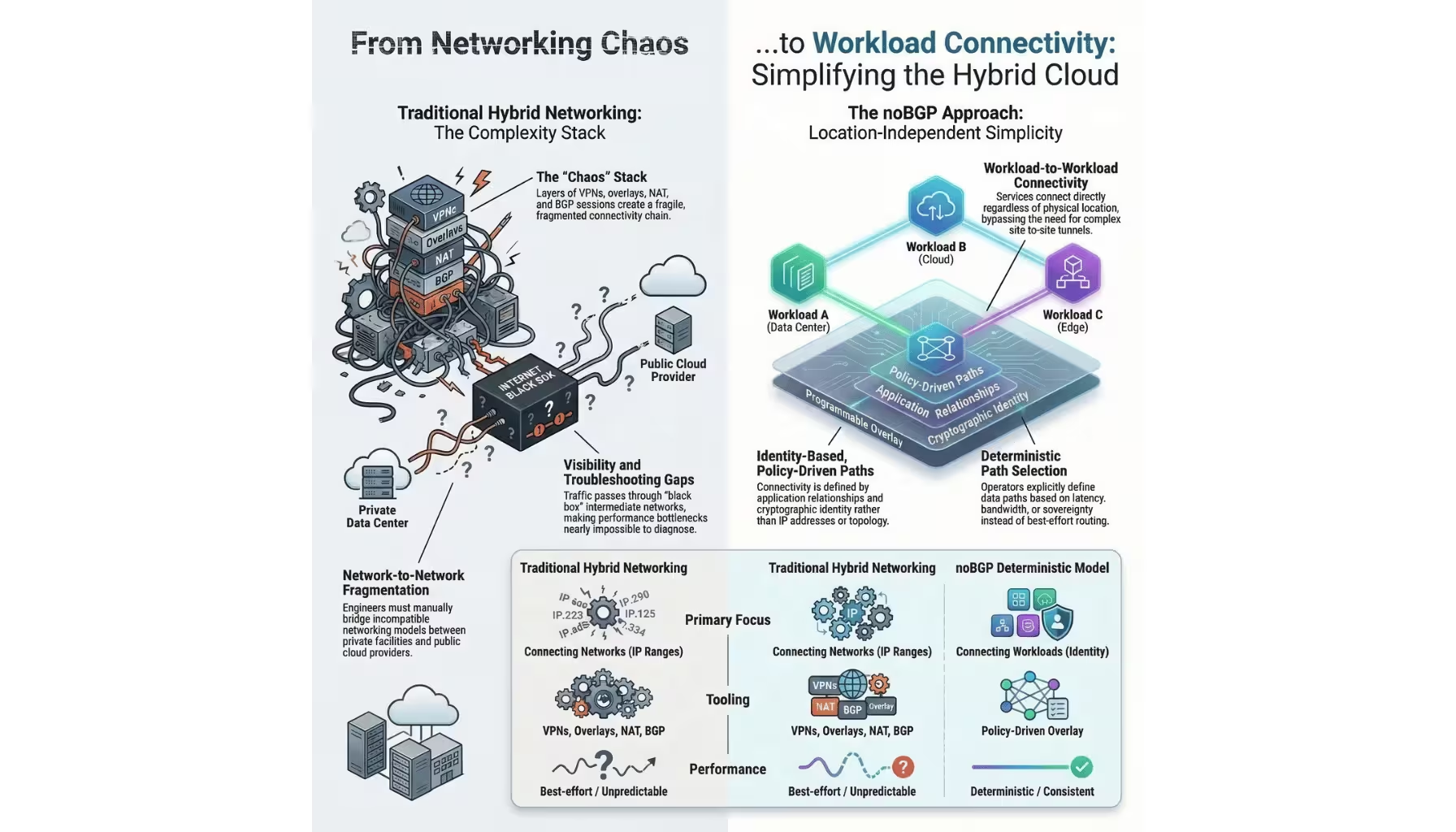

noBGP introduces a networking model built around workload connectivity rather than route advertisement.

Instead of exchanging global routing information between networks, noBGP establishes direct, authenticated connections between workloads. These connections form a programmable network overlay that operates independently of traditional internet routing behavior.

Within this model, connectivity policies define how traffic should flow between endpoints.

Operators can specify criteria such as preferred network paths, latency constraints, bandwidth availability, or geographic restrictions. The platform then selects routes that satisfy these policies.

Because connections occur between identified workloads rather than IP address ranges, infrastructure teams gain finer control over network behavior.
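The difference between identity-based and address-based rules can be illustrated with a small Python sketch. It is not noBGP's API: the `Workload` class, the `may_connect` function, and the workload names are hypothetical, and the sketch only assumes that each workload has a stable, authenticated identity that is separate from its current IP address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    identity: str   # stable, authenticated name (hypothetical format)
    address: str    # current IP address; may change when rescheduled


# Connectivity rules keyed by workload identity, not by IP range.
ALLOWED_CONNECTIONS = {
    ("orders-api", "orders-db"),
    ("orders-api", "payments"),
}


def may_connect(source, destination):
    """Authorize on identity alone; addresses play no part."""
    return (source.identity, destination.identity) in ALLOWED_CONNECTIONS


api = Workload("orders-api", "10.0.1.15")
db = Workload("orders-db", "10.0.2.20")
db_moved = Workload("orders-db", "172.16.9.4")   # same workload, new address
batch = Workload("batch-report", "10.0.1.16")    # adjacent to api in IP space

print(may_connect(api, db))        # True
print(may_connect(api, db_moved))  # True
print(may_connect(batch, db))      # False
```

The rule survives the database moving to a new address, and a workload that happens to sit next to `orders-api` in the address space gains nothing from that proximity. A prefix-based rule would struggle to express either property.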

Each connection becomes a deliberate decision rather than a side effect of routing table propagation.

The result is a network that behaves more like modern application infrastructure. Connectivity becomes programmable, observable, and aligned with the needs of distributed systems.

Data centers can build predictable networking environments without relying on the indirect mechanisms of legacy internet routing.

Deterministic data paths provide the foundation for a new networking model, but modern infrastructure extends beyond a single facility.

Applications now operate across multiple data centers, cloud providers, and edge environments. Connecting these environments introduces another layer of complexity.

In the next article, we will explore how deterministic workload connectivity simplifies hybrid infrastructure networking and enables seamless communication between data centers, clouds, and edge deployments.