TLDR: Relying on BGP to connect modern, distributed data centers (cloud, edge, on-prem) fails latency-sensitive workloads because BGP guarantees only reachability, not deterministic (predictable, explicitly chosen) paths. The industry needs to move from BGP's global routing model to a workload-centric, deterministic connectivity model.

Modern data centers power the digital infrastructure behind nearly every service people rely on. Cloud platforms, AI training clusters, financial systems, streaming platforms, and enterprise applications all depend on highly distributed infrastructure operating across multiple locations.

Yet the networking foundation connecting these systems still relies on technology designed more than three decades ago.

The Border Gateway Protocol, commonly called BGP, remains the primary routing protocol that determines how data travels across the internet and between networks. It has proven resilient and scalable, but it was built for a very different world. The internet it was designed for had far fewer networks, simpler traffic patterns, and much less operational complexity.

Modern data centers operate in a completely different environment. Applications now span regions, clouds, and edge locations. Workloads depend on predictable performance and secure connectivity. Infrastructure teams must manage increasingly complex traffic flows between thousands of systems.

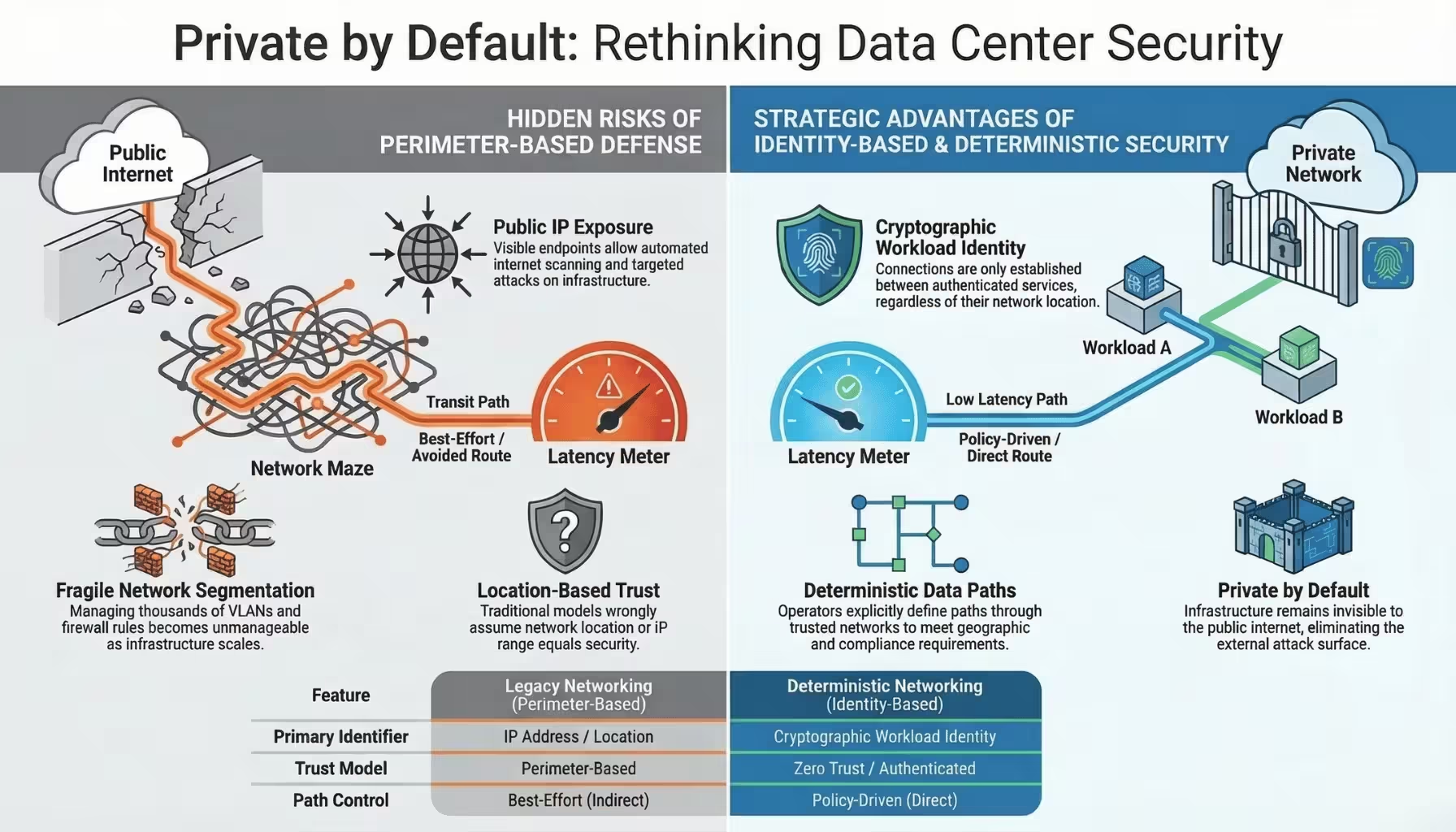

This shift exposes a fundamental limitation. BGP focuses on network reachability, not on deterministic control of how data moves between workloads.

As a result, data center operators often lack visibility and control over the paths their traffic actually takes.

Understanding this gap helps explain why the complexity of networking continues to increase in modern infrastructure.

BGP was created in the late 1980s to allow independent networks to exchange routing information across the growing internet.

Each network, known as an autonomous system, advertises the IP address ranges it can reach. Routers exchange these advertisements and build routing tables that determine where packets should be forwarded.
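To make the advertisement model concrete, here is a minimal sketch in Python. It is a toy, not a BGP implementation: the AS numbers come from the private-use range, the prefixes are documentation ranges, and the helper names `build_routing_table` and `lookup` are invented for this illustration. It shows only the basic idea that routers collect advertised prefixes and forward toward the most specific match.

```python
import ipaddress

# Hypothetical advertisements: (origin AS number, advertised prefix).
# AS numbers are private-use values; prefixes are documentation ranges.
advertisements = [
    (64512, "203.0.113.0/24"),
    (64513, "198.51.100.0/24"),
    (64514, "203.0.113.128/25"),
]

def build_routing_table(ads):
    """Turn received advertisements into (network, origin_as) entries."""
    return [(ipaddress.ip_network(prefix), asn) for asn, prefix in ads]

def lookup(table, address):
    """Return the origin AS of the most specific prefix covering address."""
    addr = ipaddress.ip_address(address)
    matches = [(net, asn) for net, asn in table if addr in net]
    if not matches:
        return None  # no advertisement covers this address: unreachable
    # Longest-prefix match: the most specific advertisement wins.
    _, asn = max(matches, key=lambda entry: entry[0].prefixlen)
    return asn

table = build_routing_table(advertisements)
print(lookup(table, "203.0.113.200"))  # covered by both the /24 and the /25
```

Note what the table contains: prefixes and who announced them. Nothing in it describes how fast or how reliable the path to that prefix is.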

The goal of BGP is simple: any network connected to the internet should be able to reach any other network.

The protocol was designed around several key principles.

First, each network controls its own routing policies. Second, routing decisions are based primarily on reachability rather than performance. Third, routing information propagates gradually through the global network.

These characteristics enabled the internet to scale rapidly while remaining decentralized.

However, they also introduced important limitations.

BGP does not guarantee optimal paths for latency, bandwidth, or reliability. Instead, each autonomous system along the path selects routes according to its own policy, using attributes such as local preference and AS-path length, none of which measures performance.
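A small Python sketch shows how policy-based selection can diverge from performance. It models only two early steps of the commonly documented BGP decision process (highest local preference, then shortest AS path) and leaves out the rest. The `Route` class, the next-hop names, and the latency figures are all hypothetical; latency is included purely to compare against, since BGP itself never sees it.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Route:
    next_hop: str
    local_pref: int    # operator policy; higher is preferred
    as_path: tuple     # AS numbers the advertisement traversed
    latency_ms: float  # measured out of band; not an input to BGP

def best_path(routes):
    """Simplified selection: highest local preference, then shortest AS path."""
    return max(routes, key=lambda r: (r.local_pref, -len(r.as_path)))

# Hypothetical candidates for the same destination prefix.
candidates = [
    Route("transit-a", local_pref=200, as_path=(64512, 64520), latency_ms=48.0),
    Route("peer-b", local_pref=100, as_path=(64530,), latency_ms=12.0),
]

chosen = best_path(candidates)
fastest = min(candidates, key=lambda r: r.latency_ms)
print(f"policy picks {chosen.next_hop}, lowest latency is {fastest.next_hop}")
```

In this made-up case the policy-preferred route is four times slower than the alternative, and the selection logic has no way to notice.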

The result is a routing system that prioritizes connectivity and independence rather than predictable performance.

For early internet services, this approach worked well.

For modern distributed applications, it often does not.

Traditional data centers once operated as relatively contained environments. Applications ran within a single facility or within a small number of closely connected locations.

Today, infrastructure looks very different.

Applications increasingly span multiple environments simultaneously.

Organizations commonly operate workloads across public cloud regions, edge locations, and on-premises data centers.

These environments exchange large volumes of traffic. Databases replicate data between regions. Microservices communicate across clusters. AI training systems move massive datasets between storage and compute nodes.

This architecture creates highly dynamic traffic patterns that continuously move between networks.

At the same time, many applications require strict performance guarantees.

Financial platforms require extremely low latency. AI clusters require high-throughput connectivity. Real-time analytics systems depend on predictable response times.

In this environment, networking becomes a critical component of application performance.

Yet the routing system responsible for moving traffic between networks still operates on best-effort path selection.

When traffic leaves a data center and travels across external networks, operators often lose control over its path.

BGP determines the route using policies defined by multiple networks along the path. These policies may prioritize cost, business relationships, or operational constraints rather than application performance.

As a result, packets may travel through paths that are far from optimal.

Several challenges emerge from this model.

Traffic between two data centers may take different routes at different times. Even when the source and destination remain the same, routing policies across the internet can shift.

This unpredictability makes it difficult to guarantee performance.

Routing decisions do not necessarily prioritize the lowest latency path. Traffic may travel through intermediate networks that introduce additional delay.

For latency-sensitive workloads, even small variations can affect performance.

Operators often attempt to influence routing behavior through techniques such as selective route announcements, AS-path prepending, or selective peering.

These methods provide indirect influence but rarely provide precise control over traffic paths.

When network failures occur, BGP requires time to propagate routing updates across the network. During this period, traffic may follow degraded or unstable paths.

This delay can prolong application downtime or degrade performance.
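The propagation delay can be pictured with a deliberately idealized Python model. It assumes a fixed per-hop delay and a made-up topology, and it ignores timers, path exploration, and policy filtering, all of which affect real convergence. Treat it as an illustration of why distant networks learn about a failure later than nearby ones, not as a convergence estimate.

```python
from collections import deque

def propagation_times(adjacency, origin, per_hop_delay_s):
    """Breadth-first spread of an update; seconds until each AS hears it."""
    heard_at = {origin: 0.0}
    queue = deque([origin])
    while queue:
        current = queue.popleft()
        for neighbor in adjacency[current]:
            if neighbor not in heard_at:
                heard_at[neighbor] = heard_at[current] + per_hop_delay_s
                queue.append(neighbor)
    return heard_at

# Hypothetical topology: a chain A-B-C-D with a side branch A-E.
adjacency = {
    "AS-A": ["AS-B", "AS-E"],
    "AS-B": ["AS-A", "AS-C"],
    "AS-C": ["AS-B", "AS-D"],
    "AS-D": ["AS-C"],
    "AS-E": ["AS-A"],
}

# Assumed 5-second delay per hop, chosen only for illustration.
times = propagation_times(adjacency, "AS-A", per_hop_delay_s=5.0)
print(max(times.values()))  # time until the farthest AS has heard the update
```

Until the last network has processed the update, some routers are still forwarding along the stale path.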

These limitations are not flaws in the protocol. They reflect BGP's design goals. The protocol prioritizes global reachability across independent networks rather than deterministic path selection.

However, modern data center workloads increasingly require more precise control.

As infrastructure becomes more distributed, network teams must build additional layers of configuration to compensate for the limitations of traditional routing.

Common techniques include:

Each layer adds operational overhead.

Troubleshooting connectivity problems often requires investigating multiple systems simultaneously. Engineers may need to analyze routing tables, firewall rules, peering relationships, and application behavior to identify the root cause of a performance issue.

In many cases, operators cannot directly observe or control the network segments between environments.

This complexity consumes engineering time and slows infrastructure deployments.

As applications become more distributed, these challenges only increase.

Modern infrastructure demands a networking model that aligns with how distributed systems actually operate.

Applications require more than simple reachability. They require predictable performance, controlled data paths, and secure communication between workloads.

A deterministic networking model addresses these requirements.

In a deterministic model, infrastructure can explicitly define the path traffic should take between workloads. Instead of relying on indirect routing policies, operators and applications can specify routing behavior based on measurable criteria such as latency, bandwidth, or trust boundaries.
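One way to picture this is a constrained shortest-path search: filter out links that fail the bandwidth or trust requirements, then pick the lowest-latency path through what remains. The Python sketch below assumes a small hypothetical topology with known link latencies and capacities; the site names, the `LINKS` table, and the `lowest_latency_path` function are illustrative and not taken from any particular product.

```python
import heapq

# Hypothetical links: (site_a, site_b, latency_ms, bandwidth_gbps)
LINKS = [
    ("dc-east", "ix-1", 4.0, 100),
    ("ix-1", "dc-west", 30.0, 100),
    ("dc-east", "carrier-x", 3.0, 10),
    ("carrier-x", "dc-west", 20.0, 10),
    ("dc-east", "partner-y", 2.0, 100),
    ("partner-y", "dc-west", 15.0, 100),
]

def lowest_latency_path(links, src, dst, min_bandwidth_gbps, trusted):
    """Dijkstra over links that satisfy the bandwidth and trust constraints."""
    graph = {}
    for a, b, latency, bandwidth in links:
        if bandwidth < min_bandwidth_gbps:
            continue  # link too small for this workload
        if a not in trusted or b not in trusted:
            continue  # link touches a site outside the trust boundary
        graph.setdefault(a, []).append((b, latency))
        graph.setdefault(b, []).append((a, latency))

    queue = [(0.0, src, [src])]
    visited = set()
    while queue:
        total, node, path = heapq.heappop(queue)
        if node == dst:
            return total, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, latency in graph.get(node, []):
            if neighbor not in visited:
                heapq.heappush(queue, (total + latency, neighbor, path + [neighbor]))
    return None  # no path satisfies the constraints

trusted_sites = {"dc-east", "dc-west", "ix-1", "carrier-x"}
result = lowest_latency_path(LINKS, "dc-east", "dc-west", 40, trusted_sites)
print(result)
```

With these inputs the fastest raw path (through `partner-y`) is excluded by the trust boundary and the `carrier-x` path by the bandwidth floor, so the search returns the path through `ix-1`. The point is that the outcome follows directly from stated criteria, and returning `None` when nothing qualifies is itself a useful, explicit signal.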

This approach provides several advantages.

It allows operators to ensure that critical traffic follows optimal paths. It simplifies troubleshooting by making network behavior more predictable. It also improves security by allowing traffic to flow only through trusted networks.

Deterministic connectivity shifts networking from a global routing problem to a workload connectivity problem.

Instead of relying on autonomous systems to determine packet paths, infrastructure platforms can establish direct, policy-driven connectivity between services.
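As a rough illustration of what "policy-driven" could mean in practice, the Python sketch below declares connectivity per pair of services and denies anything not declared. The `ConnectivityPolicy` structure, the service names, and the latency budgets are assumptions made up for this example; a real platform would define its own schema.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ConnectivityPolicy:
    source: str
    destination: str
    max_latency_ms: float  # latency budget the chosen path must meet

# Hypothetical policies between hypothetical services.
POLICIES = [
    ConnectivityPolicy("checkout-api", "payments-db", max_latency_ms=5.0),
    ConnectivityPolicy("trainer", "feature-store", max_latency_ms=20.0),
]

def find_policy(policies, source, destination):
    """Return the policy for this service pair, or None (default deny)."""
    for policy in policies:
        if policy.source == source and policy.destination == destination:
            return policy
    return None

def path_satisfies(policy, measured_latency_ms):
    """A path is acceptable only if a policy exists and its budget is met."""
    return policy is not None and measured_latency_ms <= policy.max_latency_ms

policy = find_policy(POLICIES, "trainer", "feature-store")
print(path_satisfies(policy, measured_latency_ms=12.0))
```

The unit of configuration here is a pair of workloads and a requirement, rather than a prefix and a routing attribute.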

This architectural shift represents the next step in the evolution of data center networking.

In the next article, we will explore how deterministic data paths work and why they form the foundation of modern infrastructure networking.