TLDR: Modern infrastructure is highly distributed across data centers, clouds, and the edge, demanding a new approach to networking that avoids the complexity of traditional routing methods.

Modern infrastructure no longer lives in a single data center. Organizations now operate applications across a combination of private facilities, multiple cloud providers, regional disaster recovery environments, and edge locations.

This shift has fundamentally changed how networks must operate.

Applications regularly exchange data between environments that were never designed to work together seamlessly. A database cluster may replicate between two private data centers while application services run in multiple public clouds. Edge systems may collect data and send it back to centralized processing pipelines. AI training workloads may pull datasets from storage systems located in entirely different networks.

Each of these connections introduces complexity.

The challenge facing many data center operators today is not simply routing traffic inside their own facilities. The real challenge lies in connecting distributed infrastructure environments that each operate with different networking models.

As organizations add more locations and services, the traditional approach to hybrid connectivity becomes increasingly difficult to manage.

Most modern organizations operate infrastructure across several distinct environments.

Private data centers remain important for many workloads, particularly those involving sensitive data, high-performance computing, or specialized hardware. At the same time, public cloud platforms provide flexible compute capacity and global reach.

Edge deployments extend infrastructure even further. Content delivery networks, regional compute nodes, and localized processing systems allow applications to operate closer to users and data sources.

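A typical enterprise infrastructure environment may include private data centers, several public cloud platforms, regional disaster recovery sites, and edge locations.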

These environments must communicate constantly.

Application tiers often span multiple locations. A front-end service may run in one cloud provider while backend processing runs in a private data center. Data may replicate between regions for resilience or compliance. Analytics pipelines may gather information from edge systems and process it centrally.

Networking becomes the connective tissue linking all of these components.

However, each environment introduces its own networking model, configuration tools, and routing mechanisms.

To connect distributed environments, organizations typically build layers of networking infrastructure that attempt to bridge incompatible systems.

The resulting architecture often includes a mix of technologies such as:

- Site-to-site virtual private networks that connect data centers to cloud environments.
- Overlay networking systems that create virtual networks across multiple infrastructure platforms.
- Network address translation to manage overlapping address spaces.
- Border Gateway Protocol sessions between environments to exchange routing information.
- Provider-specific networking constructs inside cloud platforms.

Each layer solves a specific connectivity problem, but together they create a complex operational stack.
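To make one of these layers concrete: network address translation usually enters the picture because two environments were assigned address ranges that collide. The sketch below checks a hypothetical address plan for such collisions using Python's standard `ipaddress` module. The environment names and CIDR ranges are invented for illustration and do not describe any particular deployment.

```python
import ipaddress
from itertools import combinations

# Hypothetical address plan; names and ranges are illustrative assumptions.
ENVIRONMENTS = {
    "dc-east": "10.0.0.0/16",
    "dc-west": "10.1.0.0/16",
    "cloud-a-vpc": "10.0.128.0/20",
    "edge-site-1": "192.168.10.0/24",
}


def find_overlaps(plan):
    """Return sorted pairs of environment names whose address ranges overlap."""
    networks = {name: ipaddress.ip_network(cidr) for name, cidr in plan.items()}
    overlaps = []
    for (name_a, net_a), (name_b, net_b) in combinations(networks.items(), 2):
        if net_a.overlaps(net_b):
            overlaps.append(tuple(sorted((name_a, name_b))))
    return sorted(overlaps)


if __name__ == "__main__":
    for env_a, env_b in find_overlaps(ENVIRONMENTS):
        print(f"{env_a} and {env_b} overlap; traffic between them would need NAT or renumbering")
```

In this made-up plan the cloud range sits inside the `dc-east` range, so any direct routing between the two would be ambiguous until one side is translated or renumbered.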

For example, a service running in one cloud region may communicate with a database cluster located in a private data center through a chain of systems that includes cloud networking infrastructure, encrypted tunnels, routing policies, and external transit providers.

Troubleshooting performance problems in this environment often requires examining multiple layers of infrastructure simultaneously.

The architecture becomes fragile as complexity grows.

Small configuration errors can disrupt connectivity between environments. Routing policies may interact in unexpected ways. Performance bottlenecks may appear in segments of the network that operators cannot directly observe.

As organizations expand their infrastructure footprint, these challenges multiply.
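A rough way to see how quickly this grows is to count connections. The sketch below assumes a full mesh, where every pair of environments gets its own tunnel. That is an assumption made for illustration; hub-and-spoke designs need fewer tunnels but concentrate traffic and failure risk in the hub.

```python
import math


def full_mesh_tunnels(environments: int) -> int:
    """Number of pairwise tunnels needed to fully mesh the given number of environments."""
    return math.comb(environments, 2)


if __name__ == "__main__":
    for n in (3, 5, 10, 20):
        print(f"{n} environments -> {full_mesh_tunnels(n)} tunnels")
```

The count grows with the square of the number of environments, and each tunnel typically brings its own routing policy and firewall rules along with it.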

Hybrid connectivity introduces both technical and operational challenges.

One of the most common problems is fragmentation.

Each environment uses its own networking model. Data center networks rely on traditional routing protocols and internal switching architectures. Cloud providers expose networking through platform-specific abstractions. Edge systems may use lightweight networking stacks optimized for limited resources.

Infrastructure teams must learn and manage all of these models simultaneously.

This fragmentation slows deployment and increases operational overhead.

Network engineers spend significant time configuring connectivity between environments. Each new service may require updates to routing policies, firewall rules, and network address translation systems.

Troubleshooting also becomes more difficult.

When a connectivity issue occurs between environments, engineers must investigate multiple systems to determine the root cause. The problem may lie in cloud networking configuration, external routing behavior, encryption tunnels, or intermediate transit networks.

Visibility into these layers is often incomplete.

Performance problems can become especially difficult to diagnose. Traffic may travel through several networks before reaching its destination. Each segment of the path may introduce latency, congestion, or packet loss.

Without clear visibility into the full path, operators often rely on indirect measurements to understand network behavior.
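When per-segment measurements are available, the arithmetic is simple: latency adds up along the path, and loss compounds. The sketch below uses invented segment names and numbers, and it assumes packet loss on each segment is independent of the others, which is a simplification.

```python
import math

# Hypothetical per-segment measurements: (name, latency in ms, loss as a fraction).
SEGMENTS = [
    ("cloud-vpc", 1.0, 0.000),
    ("ipsec-tunnel", 4.0, 0.001),
    ("transit-provider", 18.0, 0.005),
    ("dc-fabric", 0.5, 0.000),
]


def end_to_end(segments):
    """Return (total latency in ms, end-to-end loss fraction) for a path."""
    total_latency = sum(latency for _, latency, _ in segments)
    # A packet arrives only if every segment delivers it.
    delivered = math.prod(1.0 - loss for _, _, loss in segments)
    return total_latency, 1.0 - delivered


def slowest_segment(segments):
    """Return the name of the segment contributing the most latency."""
    return max(segments, key=lambda segment: segment[1])[0]


if __name__ == "__main__":
    latency_ms, loss = end_to_end(SEGMENTS)
    print(f"latency: {latency_ms:.1f} ms, loss: {loss:.4%}")
    print(f"slowest segment: {slowest_segment(SEGMENTS)}")
```

The difficulty described above is that operators often cannot fill in this table: a segment owned by a transit provider or hidden inside a cloud platform yields only the end-to-end totals, not the per-segment rows.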

As infrastructure continues to grow, these operational burdens increase.

A more effective approach to hybrid networking begins by shifting the focus from infrastructure networks to workloads.

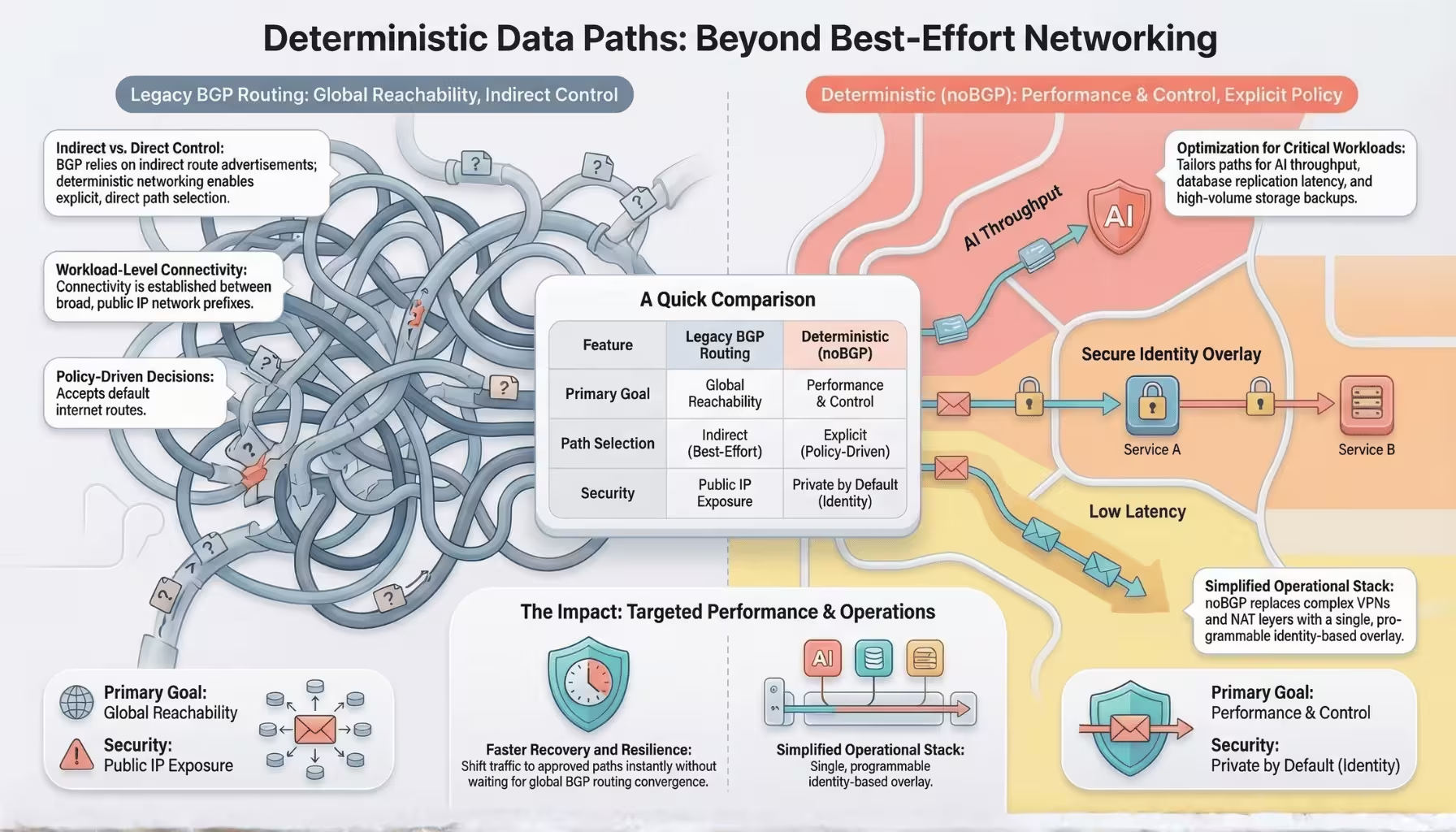

Traditional networking connects networks to other networks. Routing protocols exchange reachability information for entire address ranges, and traffic flows according to those global routing decisions.
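The sketch below illustrates that behavior with a longest-prefix-match lookup, the standard rule IP routers use to pick a route. The prefixes and next-hop labels are hypothetical, and a real router uses specialized data structures rather than a linear scan.

```python
import ipaddress

# Hypothetical routing table; prefixes and next-hop labels are illustrative only.
ROUTES = [
    ("0.0.0.0/0", "internet-transit"),
    ("10.0.0.0/8", "dc-core"),
    ("10.20.0.0/16", "vpn-to-cloud-a"),
]


def lookup(destination, routes=ROUTES):
    """Return the next hop of the most specific prefix that contains destination."""
    addr = ipaddress.ip_address(destination)
    matches = []
    for prefix, next_hop in routes:
        network = ipaddress.ip_network(prefix)
        if addr in network:
            matches.append((network.prefixlen, next_hop))
    if not matches:
        raise LookupError(f"no route to {destination}")
    # Longest-prefix match: the route with the largest prefix length wins.
    return max(matches)[1]


if __name__ == "__main__":
    for ip in ("10.20.5.9", "10.20.77.1", "10.3.3.3", "8.8.8.8"):
        print(ip, "->", lookup(ip))
```

Every address inside `10.20.0.0/16` receives the same forwarding decision, whichever service happens to own it. The routing table knows about ranges, not about the workloads inside them.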

Modern applications operate at a much finer level of granularity.

Services communicate directly with other services. Containers connect to databases. Analytics systems exchange data with storage clusters. Individual components form highly dynamic relationships that do not map cleanly to network-level routing policies.

noBGP approaches hybrid connectivity from the perspective of workload relationships rather than network topology.

Instead of connecting entire networks together, noBGP establishes secure connectivity between workloads regardless of where they run.

A service running in a private data center can communicate directly with another service running in a cloud environment or edge location without requiring traditional network-level routing integration between those environments.

Connectivity becomes defined by application relationships rather than by infrastructure boundaries.

This model simplifies hybrid networking significantly.

Infrastructure location becomes less important because connectivity operates at the workload level. Applications can communicate across environments without requiring complex routing configurations or extensive network integration.

Operators can define connectivity policies that control how traffic flows between workloads while maintaining a consistent networking model across all environments.
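As a rough illustration of what a workload-level policy could look like, the sketch below keys rules on workload roles rather than on addresses or locations. Everything here (the `Workload` and `Rule` types, the role names, the ports) is a hypothetical model written for this article; it is not noBGP's API or configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    role: str
    location: str  # informational only; the policy check never reads it


@dataclass(frozen=True)
class Rule:
    source_role: str
    dest_role: str
    port: int


# Hypothetical policy: which roles may talk to which, and on what port.
POLICY = {
    Rule("web", "api", 443),
    Rule("api", "db", 5432),
}


def is_allowed(src, dst, port, policy=POLICY):
    """Default deny: permit a connection only if a rule matches roles and port."""
    return Rule(src.role, dst.role, port) in policy


if __name__ == "__main__":
    web = Workload("web-1", "web", "cloud-a/us-east")
    api = Workload("api-1", "api", "private-dc-1")
    db = Workload("db-1", "db", "private-dc-2")
    print(is_allowed(web, api, 443))   # web may reach api
    print(is_allowed(web, db, 5432))   # web may not reach db directly
```

Because the check ignores `location`, moving `api-1` from a private data center to a cloud region would not require any change to the policy, which is the property the workload-level model is after.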

Deterministic workload connectivity enables simpler architectures for many common distributed systems.

Consider a database cluster that spans two data centers and a cloud environment.

In traditional networking models, operators must configure site-to-site tunnels, manage routing policies between locations, and ensure that firewall rules permit replication traffic across each environment.

With workload-level connectivity, database nodes establish direct connections to one another regardless of their physical location. Replication traffic follows policies that prioritize reliable, low-latency paths.

Another example involves application tiers distributed across environments.

A web front end may run in multiple cloud regions to serve global users. Backend processing services may run in private data centers where sensitive data resides. Analytics pipelines may operate in specialized compute clusters.

Instead of building complex network integrations between these environments, each service connects directly to the components it depends on.

Edge deployments benefit from the same model.

Regional systems collecting data from sensors or users can send information directly to centralized processing pipelines without requiring extensive network integration between edge infrastructure and core data centers.

In each case, connectivity reflects application relationships rather than network topology.

As infrastructure continues to expand across clouds, data centers, and edge environments, networking must evolve to support this distribution.

Traditional routing systems were designed for a world where networks connected other networks. Modern infrastructure requires a model where workloads connect directly to other workloads regardless of location.

Deterministic connectivity enables this shift.

By establishing secure, policy-driven connections between services, platforms such as noBGP remove much of the complexity associated with hybrid networking. Infrastructure teams gain a consistent connectivity model that works across environments without requiring extensive routing configuration.

Applications can communicate freely across distributed infrastructure while operators maintain visibility and control over network behavior.

In the next article, we will examine how this connectivity model improves security by enabling private infrastructure and identity-based communication between workloads.